A close friend of mine who also has been, like me, until recently working on MS-BI all through his career has moved on to different stream for performing ETL i.e. using of Informatica.

Having been relatively new to Informatica (with only couple of years and few projects here and there), we had many a arguments on which is better and why. As I stated out in my first post about Informatica, I didn’t have good feelers with it when starting out. There were too many IDE’s to grapple with, transformations do not look intuitive, simple tasks look very hard to perform etc. One of the arguments he did make is Informatica is a market leader and is lot more powerful owing to it’s code re-usability, out-of-the-box connectivity across varied sources, error handling etc and SSIS is doing now a lot of catch-up.

I decided to dig deep and validate my own findings on the ‘out-of-the-box connectivity and error handling’. This is one of such posts and more to come.

Task –

Load million records from a source table to a destination table. Out of the million records, there are about 10 error records. Source Table would be a constant. There would be two runs – first run with table in Oracle database as destination, second run with table in a SQL Server database as the destination.

I will use both SSIS and Informatica to accomplish this task and see how best both accomplish the task.

Setting up of Source –

I have Oracle 11g express set-up on the machine. My source table is simple table consisting of Id, Name columns with about a million records in it. Id is basically a auto-incrementing column. After it’s populated I am running a script to update 10 random records to null. Below SQL code performs this –

--Source table to hold the million records create table SrcEmployeeData ( Id int ,Name varchar(50) ); --Insert million records in it. The value level auto-increments insert into SrcEmployeeData select level, 'test' from dual connect by level <= 1000000; --Update 10 random records with Name as NULL update SrcEmployeeData set Name = null where Id in ( select Id from ( select * from SrcEmployeeData order by DBMS_RANDOM.VALUE ) where ROWNUM <=10 ); --Commit the data commit;

Once done, confirm to check there are 1 million records with about 10 records having NULL in Name column.

Task 1 – Tool – Informatica, Destination – Oracle

Here is the definition of destination table in Oracle

create table HR.EmployeeData(

Id int

,Name varchar(50) not null

);

I created a mapping called m_Load_EmployeeData_Oracle which contains just two transformations – Source and Destination as shown below –

I then developed a workflow for it wf_m_Load_EmployeeData_Oracle. I then set the Connections in the ‘Config’ section of the session as appropriate and started the workflow. Here is the result –

So right-off the bat, without any tweaking, the workflow got successfully executed and correctly captured the 10 error records with a execution time of 1m 33sec.

Task 2 – Tool – Informatica, Destination – SQL Server

I created destination table in SQL Server with same table definition as in Task 1. I then created a new mapping m_Load_EmployeeData_SQL with same mapping i.e. source connected to destination as shown below –

Created the wofklow wf_Load_EmployeeData_SQL followed by setting appropriate ‘Connections’ in the session properties. Ran the workflow and here is the result –

10 records successfully captured without any ado and in the same time. Absolutely no difference in time.

Task 3 – Tool – SSIS, Destination – SQL Server

Source still remains the same. Here comes the real kicker even before we get into comparison. If we set-up the DFT with a simple ‘OLE DB Source’ and ‘OLE DB Destination’ components and start the package, it is bound to fail as shown below –

Out-of-the box error capturing just doesn’t exist. Here is what I have done to capture the error records using the 3 DFT technique. This is how it works. Create three copies of your ‘OLE DB Destinations’. Connect your source to the first DFT. Set the properties ‘Rows per batch’ and ‘Maximum insert commit size’ values to 50000 as shown below –

Under mappings, the columns would then be auto-mapped by name. Connect the ‘Error Output’ of the first DFT to the second DFT copy. Set the two values for that as 10000. Connect this second DFT’s error re-direction to the third DFT copy. For the final one set, the two values as 1. Rename the tasks appropriately.

Now drag in another DFT, and plug in the third copy of the DFT error output on to this new one. This time we are going to create a new destination table for capturing the error say ‘dbo.EmployeeData_Error’. The table definition is as listed below –

CREATE TABLE [dbo].[EmployeeData_Error]( [ID] [numeric](38, 0) NULL, [NAME] [nvarchar](50) NULL, [ErrorCode] [int] NULL, [ErrorColumn] [int] NULL, [DateInserted] [datetime] NULL ) ON [PRIMARY] GO ALTER TABLE [dbo].[EmployeeData_Error] ADD CONSTRAINT [DF_EmployeeData_Error_DateInserted] DEFAULT (getdate()) FOR [DateInserted] GO

At the mapping level, ensure to connect the ErrorCode and ErrorColumn of the third copy of DFT wherein you are setting both values to 1, to the same columns of the table EmployeeData_Error as shown below –

With this being set-up, the package is executed with no problem whatsoever as shown below-

Looks hale and hearty. How about the speed of execution –

It completed in just 14 seconds. The fastest so far in the tasks we performed. Now let’s go to the final task.

Task 4 – Tool – SSIS, Destination – Oracle

Before we get into the package set-up, one needs to make sure the Oracle Provider for OLE DB is present. If not, get the latest stable version from the following link. The package set-up would be very simple this time around. In total there would be three tasks – OLE DB Source for fetching the data, 2 OLE DB Destinations (1 for the destination and the other for capturing the error).

The OLE DB Destination for storing the target data will need to have the ‘Data access mode’ set to – ‘Table or View’ and one cannot use ‘Fast Load’ option. Below image shows the set-up that needs to be done –

With that set, here is the snapshot of the package run –

And the execution speed –

Almost an Hour for just a million records. One whole hour for a dataset containing only two columns. I had a job wherein I was transferring about 2 million records from a much larger table and we had similar requirement to capture the error records. That package ran for almost 3 hours to complete. Anything that is not set to SQL Server as destination, SSIS performs horribly.

Conclusion-

SSIS offers very poor performance for data loads which differs from SQL Server. If you want more robustness, one needs to look outside for any third-party tool. Informatica on other hand just doesn’t care what the source and destinations are. It just works as expected giving uniform performance.

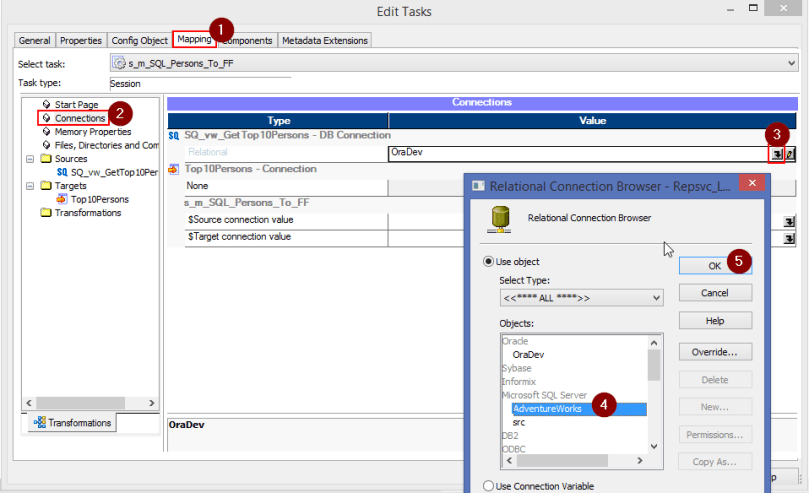

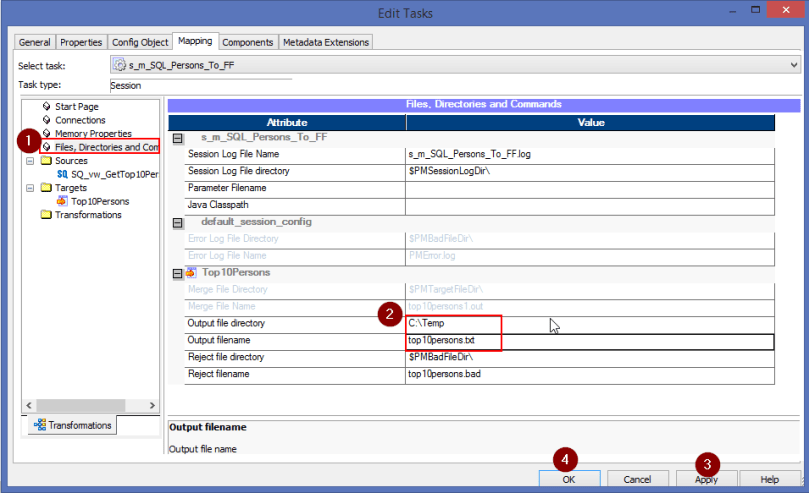

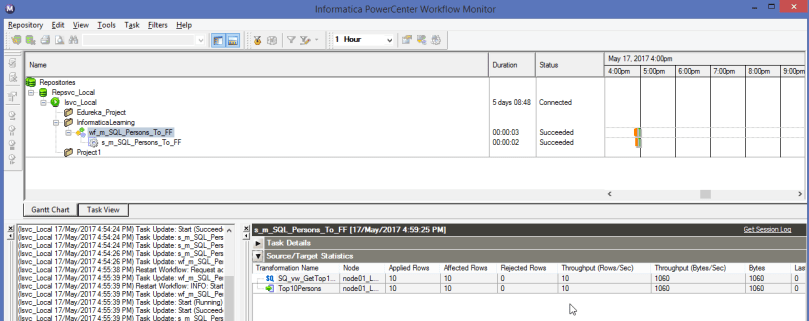

Ensure to change the default database to the actual Database from which you are sourcing the data which in this case is – AdventureWorks2014

Ensure to change the default database to the actual Database from which you are sourcing the data which in this case is – AdventureWorks2014