As they say there is first time for everything. Having worked on so many packages all through my career never once I had a need to import a flat file containing dates directly from SQL Server Import and Export Wizard. It also may be a case of not having the data type set as ‘Date’ in the column and rather take it as a varchar value when doing imports.

So today as part of some task I had a requirement to import data with some date columns in it. Let’s say data for my column data looks like this in a file called DateTest.txt. Note that there is a row with blank data –

StartDate,EndDate 2017-01-01,2017-01-01 2017-05-01,2017-05-01

So I opened up the SQL Server Import Wizard, set the Data Source as – Flat File Source and browsed and obtained the File as shown below –

Now go to Advanced and set the properties for both the columns StartDate and EndDate as ‘DT_DBDATE’ which translates to date datatype of SQL Server. For more info refer to – link. The screenshot below is for ‘EndDate’. Do the same for StartDate column as well.

Set the Destination to your local Database say RnD as in my case as shown below –

In the ‘Select Source Tables and Views’, leave it as is and click on ‘Next>’ (This will create the table by default). Leave the defaults as is in the ‘Save and Run Package’ screen, leave the defaults and click on ‘Next>’. Click on Finish in the last page of the wizard. You will see the ‘Operation Stopped’ with ‘Copying to [dbo].[DateTest]’ set to error as shown below.

If you dig further in the Messages, here is what it throws up the following error-

The error states –

An OLE DB record is available. Source: “Microsoft OLE DB Provider for SQL Server” Hresult: 0x80004005 Description: “Conversion failed when converting date and/or time from character string.”.

(SQL Server Import and Export Wizard)

The blank values are treated as strings and that is what the error states.

Solution 1 –

Instead of setting the data type as ‘DT_DBDATE’ set is as ‘DT_DATE’ then it will pass. There are two side-effects to this –

- The destination column would be of type datetime instead of date.

- All the blank values will be set as ‘1899-12-30 00:00:00.000’ as can be seen below

It’s not a optimal solution. If you are just importing one file with limited columns of such type and if the destination table on which are you are importing isn’t a large table then we can go with this approach. Depending on the use case, one can then proceed to either update the values as NULL or leave it as is.

Solution 2 –

This involves creating a package out of the same operations. Now one can go about it in the traditional way i.e. open SQL Server Data Tools (Visual Studio), add new package, drag and drop a DFT, yada yada.

Instead let’s replicate the same behavior as before. How you may say. Did you know that one can fire up a ‘SQL Server Import Wizard’ from SQL Server Data Tools itself? Now before we go further, if you have been following along, the table ‘dbo.DateTest’ in your destination should be existing.

Open a SSIS Project and go to ‘PROJECT’->’SSIS Import and Export Wizard…’ as shown below –

The wizard looks exactly the same as fired from SQL Server. At the end you will notice the difference. Follow the same steps that you have done earlier i.e. by setting the data as ‘DT_DBDATE’. Instead of executing, it creates a package and the final window would look like this. It will create a new package called Package1.dtsx if there isn’t one already. If there is one it would create Package2.dtsx. –

At this point if you run the package that gets generated automatically as is you will get the same error. Here are the changes that are to be done.

Open the Control Flow Task – ‘Data Flow Task 1’. In the Data Flow task, open the task ‘ Source – DataTest_txt’. Ensure that ‘Retain null values from the sources as null values in the data flow’ as shown below –

Secondly double-click on the ‘SourceConnectionFlatFile’ connection manager, go to Advanced and modify the data types of StartDate and EndDate to DT_STR and length 10. Below image is shown for EndDate. Do it for StartDate as well.

Since the source connection manager is changed, the DFT – ‘Source-DataText_txt’ needs a change. Double-click on the DFT and you will be presented with the changes as shown below. Accept them.

Delete the connector between Flat File and the OLE DB Destination and drag in a Derived Transform in between them. Add the following two expressions as shown below –

- Derived Column Name – DC_StartDate ; Expression – StartDate == “” ? NULL(DT_DBDATE) : (DT_DBDATE)StartDate

- Derived Column Name – DC_EndDate ; Expression – EndDate == “” ? NULL(DT_DBDATE) : (DT_DBDATE)EndDate

Connect the Derived Transform output to the OLE DB Destination and set the mappings with the newly transformed columns.

In addition to that set the ‘Keep Nulls’ property to yes.

That’s it. Now execute the package. All the three records would now get successfully

Data gets transferred with the blank values retained as NULL values as shown below –

To summarize the solution, basically out-of-the box Import/Export wizard will not work with getting NULL values to date columns. Here are the changes to be done –

- Set all the date columns for which you would want to retain NULL for blanks as String values

- Add a Derived Transformation to change the data type of the data to DT_DBDATE.

- Set the retain null property at both source and destination to yes.

Solution 3 –

The ideal solution should be the one wherein one can use DT_DBDATE at the source itself and it should go through to destination. For some reason I have been getting strange errors while doing it as shown below –

Error:Year, Month, and Day parameters describe an un-representable DateTime.

. I am still working on it. Once I get a better solution, will post it here.

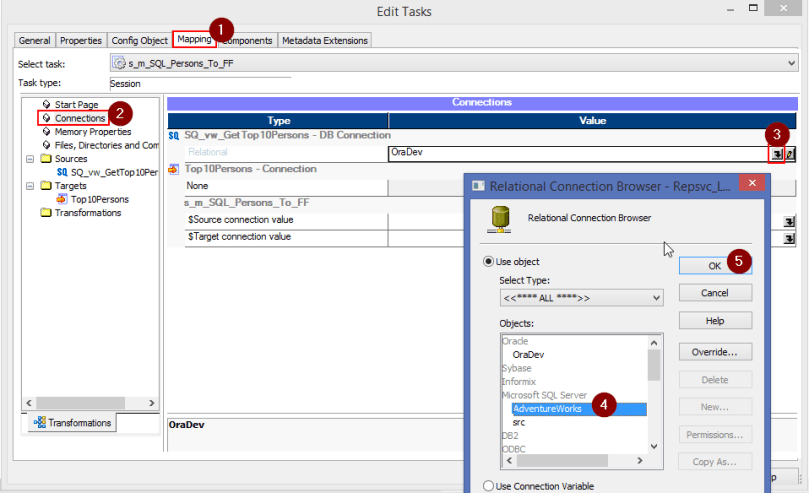

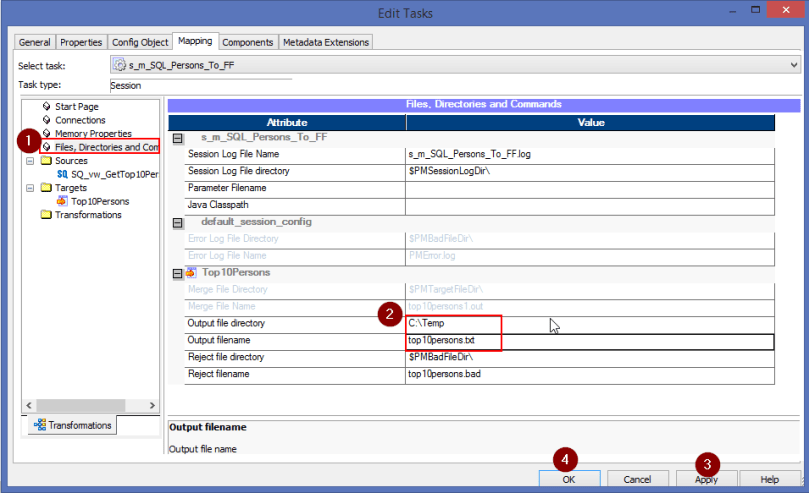

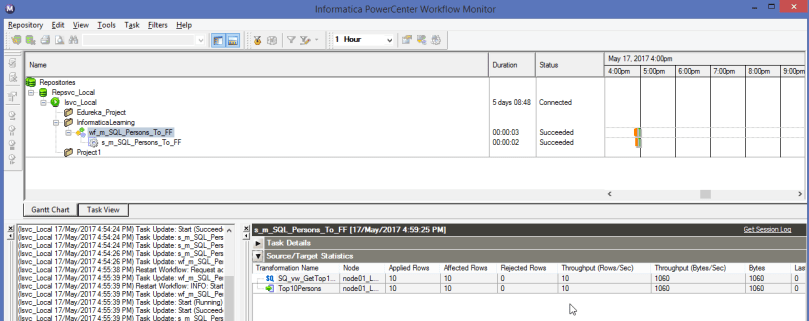

Ensure to change the default database to the actual Database from which you are sourcing the data which in this case is – AdventureWorks2014

Ensure to change the default database to the actual Database from which you are sourcing the data which in this case is – AdventureWorks2014